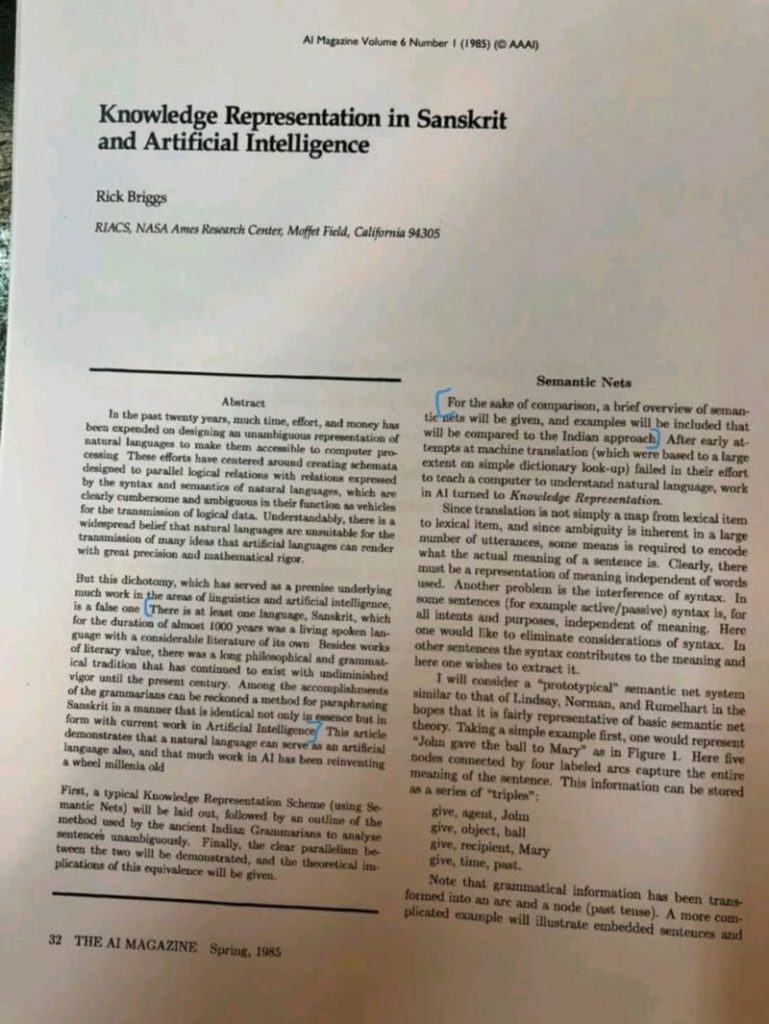

So, 2016 was the year of chatbots. See the graphic at the end (it’s a big image) and we see that many mainstream companies have built their bots. A GitHub repo search will reveal a similar story. The buzz that chatbots are creating is huge, so much so that people claim chatbots will soon kill websites and mobile apps. A similar buzz was created a decade ago, when mobile apps and app stores became mainstream. The mobile app wave was also looked at with incredulity, so it is natural to be more welcoming towards the chatbot wave. But the similarity does not hold beyond the English sentence you just read.

The move from website to mobile was actually a reshaping of the form factor of the computing device. It is but natural, albeit in hindsight, that content and its delivery fit themselves into the new form. In the mobile wave, too, “mobile” and “app” were used interchangeably. While mobile represents the shift, the app merely represents engagement. This difference is vital to analyzing the chatbot buzz.

Matt Schlicht of Chatbot Magazine defines it this way: “A chatbot is a service powered by rules that a user interacts with using a chat interface. The service can be any number of things, ranging from form to function and live in any major chat product”. One might agree with this definition as is, or differ with it in parts. Chatting itself is not a new paradigm, nor is the concept of a daemon process accomplishing a task. What is new is the combination: an always-running agent, the bot, facilitating the chat. The daemon could be assigning your chat requests to human beings, as in a typical support center scenario. It might be reading your sentences and applying some pattern rules to serve a preconfigured response. And because the commoditized state of the art allows us to programmatically interpret human sentences (either typed or spoken), a so-called NLP engine can be plugged into the chatbot to improve the precision of the intent inference.

Most NLP-styled chatbot guides mention NLP and intent-action mapping in the same breath, but this is a false connection. Intent-action mapping is what it is, whether the inference is made via NLP, regex or rulesets. One might also throw machine learning into the stack to further enhance the inference via correlation and other techniques. That is the short summary of what we read first in the chatbot buzz. What often goes missing is the true nature of the chatbot for the end user, i.e. its conversational quality.
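To make the point concrete, here is a minimal Python sketch in which the intent-action table stays fixed while the inference step is swapped. Everything in it is an assumption made for illustration: the intent names, the canned replies, and both inference functions are hypothetical and stand in for whatever NLP engine, regex or ruleset a real bot would use.

```python
import re
from typing import Callable, Dict, Optional, Set

# Intent -> action table. Hypothetical intents and replies, invented for this sketch.
ACTIONS: Dict[str, Callable[[], str]] = {
    "book_flight": lambda: "Opening the flight booking flow.",
    "pay_bill": lambda: "Opening the bill payment flow.",
}


def infer_with_regex(text: str) -> Optional[str]:
    """Infer the intent with hand-written regular expressions."""
    if re.search(r"\b(book|reserve)\b.*\bflight\b", text, re.IGNORECASE):
        return "book_flight"
    if re.search(r"\bpay\b.*\bbill\b", text, re.IGNORECASE):
        return "pay_bill"
    return None


# Keyword ruleset: the intent with the most keyword hits wins.
KEYWORDS: Dict[str, Set[str]] = {
    "book_flight": {"flight", "fly", "ticket"},
    "pay_bill": {"bill", "invoice", "dues"},
}


def infer_with_keywords(text: str) -> Optional[str]:
    """Infer the intent by counting keyword overlaps."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    best_intent, best_hits = None, 0
    for intent, keywords in KEYWORDS.items():
        hits = len(words & keywords)
        if hits > best_hits:
            best_intent, best_hits = intent, hits
    return best_intent


def respond(text: str, infer: Callable[[str], Optional[str]]) -> str:
    """Map the inferred intent to its action; the mapping ignores how it was inferred."""
    intent = infer(text)
    if intent is None:
        return "Sorry, I did not understand that."
    return ACTIONS[intent]()
```

Calling `respond("I want to book a flight to Pune", infer_with_regex)` and the same call with `infer_with_keywords` both end up in the same action. An NLP-backed or ML-backed function with the same signature could be dropped in without touching `ACTIONS`.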

It is this conversational quality of the interaction that needs deeper scrutiny, because it represents an alternative to the laid-out quality of our interfaces.

It’s fine to say that language is the most natural interface humans understand, and that it is the interface bots use. But that misses the fact that human language has an intent-explain-infer-confirm cycle embedded in it.

This model is very powerful when the “range” of the expression is huge, like asking for qualified advice amid multiple factors. But it is very lengthy when the expressions are straightforward.

The typical chatbot sample we are shown is a flight-booking bot, a bill-pay bot or an e-commerce bot. All these interactions are already well modeled in the human mind, and the laid-out selection model fits them better. In fact, it is a liberal (read: open) model that lets both parties explore more options alongside the intended interaction.

A personal assistant that can suggest a song based on the weather, travel duration, earlier playlist usage and so on, by contrast, is the right case for chat (voice, text or gesture), because chat can digest the complexity of the intent-explain-infer-confirm cycle.

The argument goes that because end users are increasingly available on chat platforms, business processes should move there too. Following it will only add to chat fatigue once the novelty fades.

We need to trust end users to decide which model of interaction is more convenient and time-efficient, and offer them that choice. Hence conversational quality, used at qualified places while retaining the laid-out quality of presented content, is the right blend. A jump into chat via bots will be short-lived for most mainstream businesses. In fact, the power of ML or AI, whichever we prefer to call it, implies that the business understands us and is ready to serve us better even before we start interacting. To transfer this whole responsibility onto AI-assisted chat interfaces via chatbots is laziness.

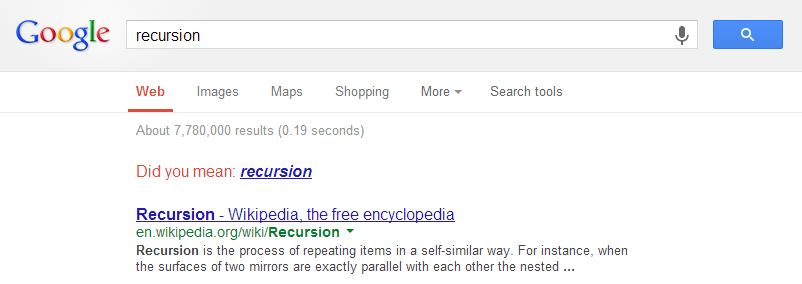

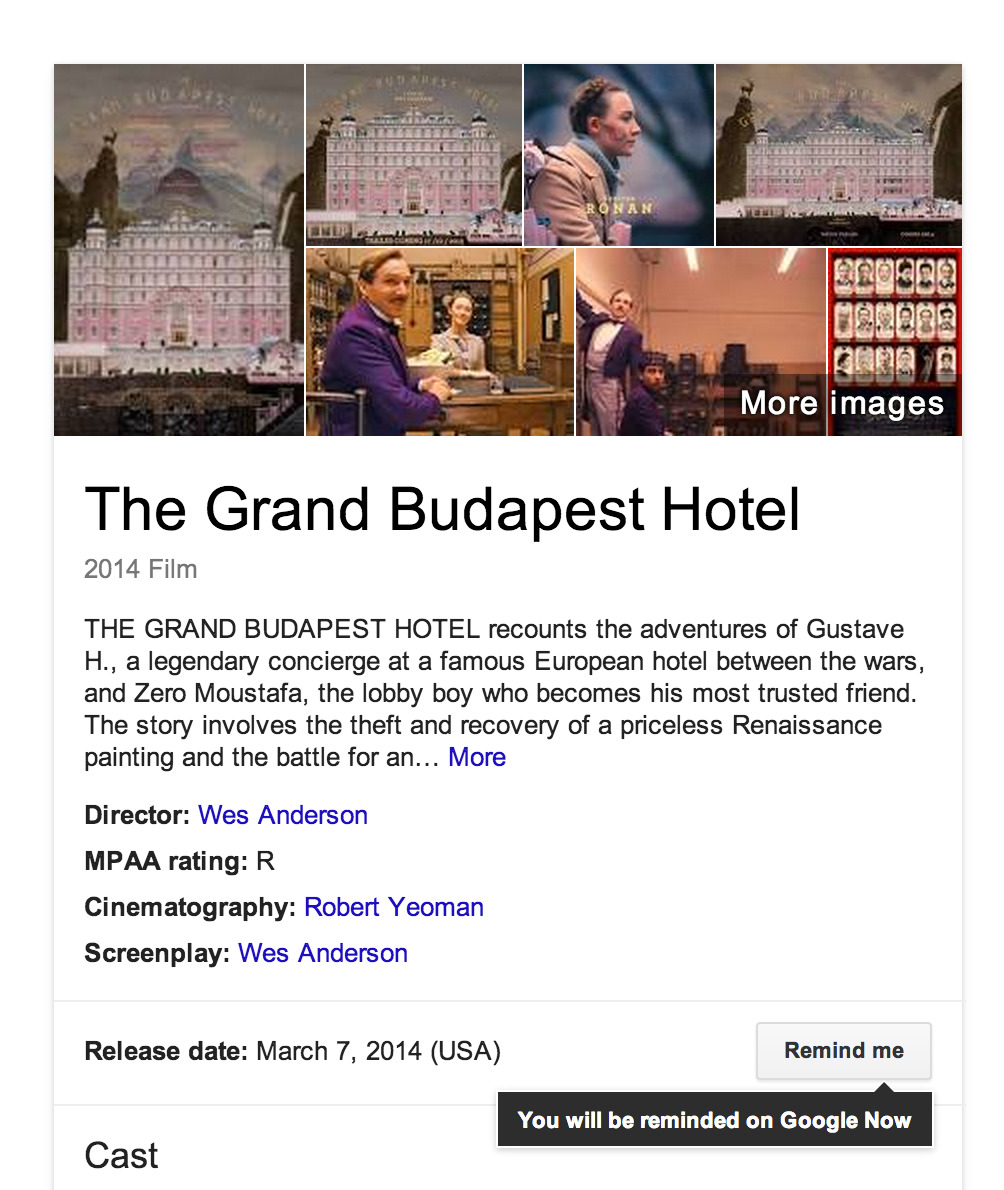

In the end, the Google search experience comes to the rescue. It lays out a nice search box for us to express ourselves, then does a huge amount of work in the background to make the best sense of what we intended. Yet it subtly suggests alternatives and corrections, as in a human conversation, when its confidence in the inference it made is not high.
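The idea of answering directly when confident and gently asking when not can be sketched in a few lines of Python. This is only an illustration and says nothing about how Google actually works: the vocabulary, both thresholds and the `interpret` function are hypothetical, and the similarity score comes from the standard library's `difflib.SequenceMatcher`.

```python
from difflib import SequenceMatcher

# Hypothetical vocabulary and thresholds, chosen only for this sketch.
KNOWN_TERMS = ["recursion", "chatbot", "conversation"]
CONFIDENT = 0.99  # at or above this, treat the query as understood
SUGGEST = 0.75    # between SUGGEST and CONFIDENT, offer a correction


def interpret(query: str) -> str:
    """Serve the query as typed, adding a suggestion only when a near match exists."""
    q = query.strip().lower()
    # Score every known term against the query and keep the closest one.
    best_term, best_score = max(
        ((term, SequenceMatcher(None, q, term).ratio()) for term in KNOWN_TERMS),
        key=lambda pair: pair[1],
    )
    if best_score >= CONFIDENT:
        return f"Showing results for '{best_term}'"
    if best_score >= SUGGEST:
        # Not sure enough to override the user, so confirm instead.
        return f"Showing results for '{q}'. Did you mean '{best_term}'?"
    return f"Showing results for '{q}'"
```

With these assumed thresholds, `interpret("recurson")` serves the query as typed and appends a "Did you mean" prompt, while `interpret("recursion")` answers without asking. The confirm step of the cycle appears only when the inference is shaky.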

It is a good case of fitment-driven software rather than buzz-driven software, and that’s the case against chatbots, AI or no AI.

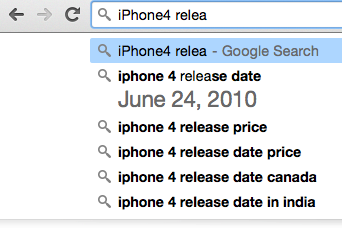

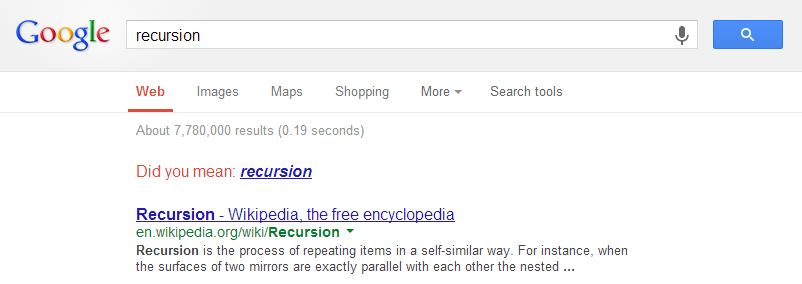

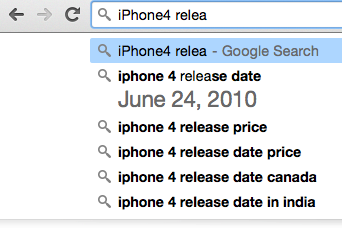

Here are some samples of how subtly Google lays out the conversation, courtesy of Little Big Details.

1. If you search for the word “recursion” in Google, it’ll suggest “recursion”. If you click on the suggestion, it’ll suggest “recursion” again… creating a recursive search. And don’t miss the spell-correction prompt, “Did you mean”.

2. Google Chrome – Displays some search results in the suggested input area.

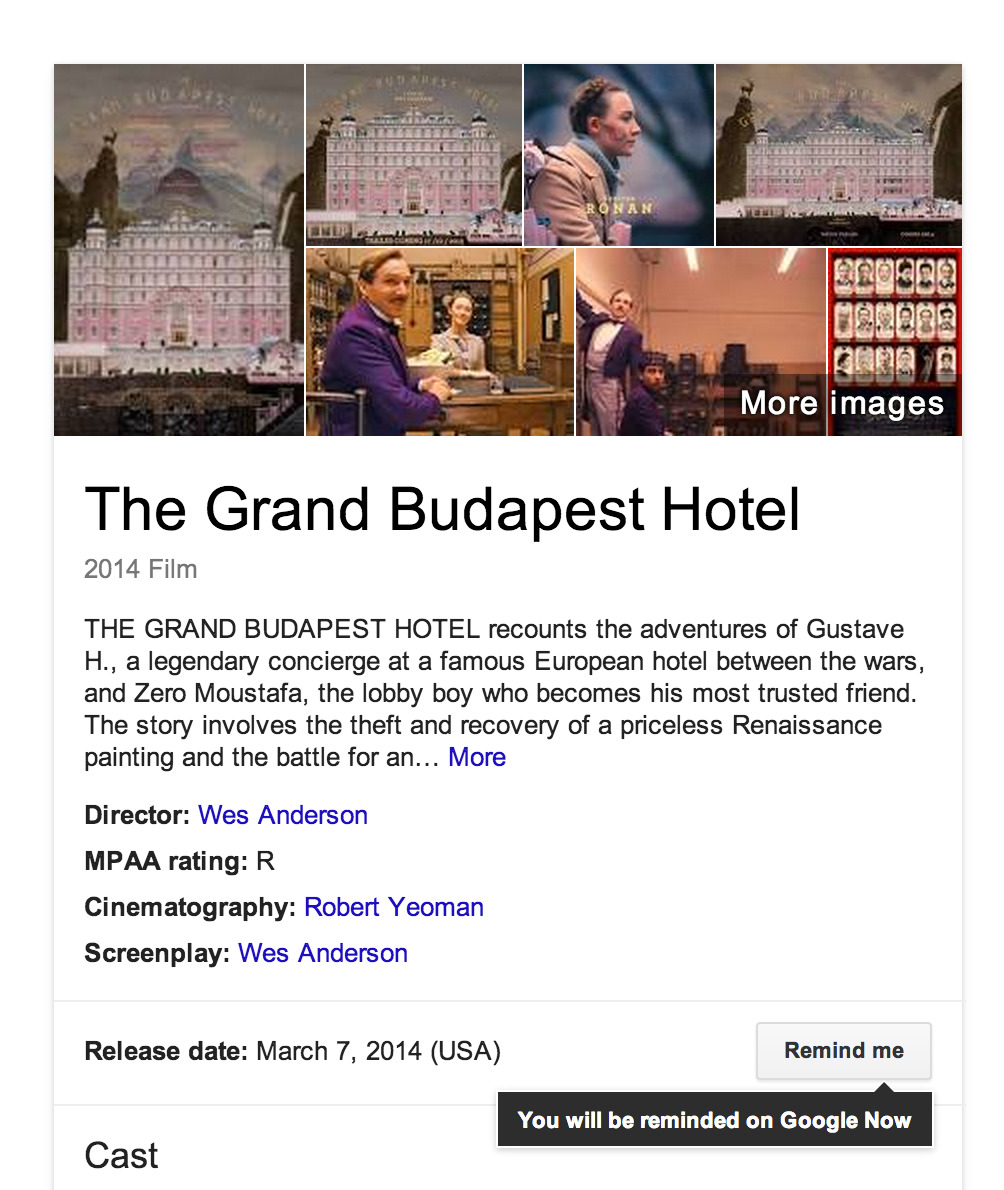

3. When searching for an upcoming movie, the Knowledge Graph box shows the release date and asks if you’d like to create a reminder.

4. The O’Reilly report:

Bot Landscape, O’Reilly